In the rapidly evolving field of optimization algorithms, a new approach combines evolutionary principles with the capabilities of Large Language Models (LLMs). This framework, known as LLaMEA (Large Language Model Evolutionary Algorithm), automatically generates metaheuristic optimization algorithms. Let’s delve into how this approach works and why it holds promise for various industries.

The Genesis of LLaMEA

Developed by Niki van Stein and Thomas Bäck, LLaMEA leverages the power of LLMs like GPT-4 to create, mutate, and refine metaheuristic algorithms. Metaheuristics are general-purpose search strategies that look for good, ideally near-optimal, solutions by balancing exploration and exploitation of the search space. They are often used in complex optimization problems where traditional methods fall short. By integrating LLMs, LLaMEA automates this design process, generating new algorithms iteratively and selecting the best-performing ones based on predefined metrics.

How LLaMEA Works

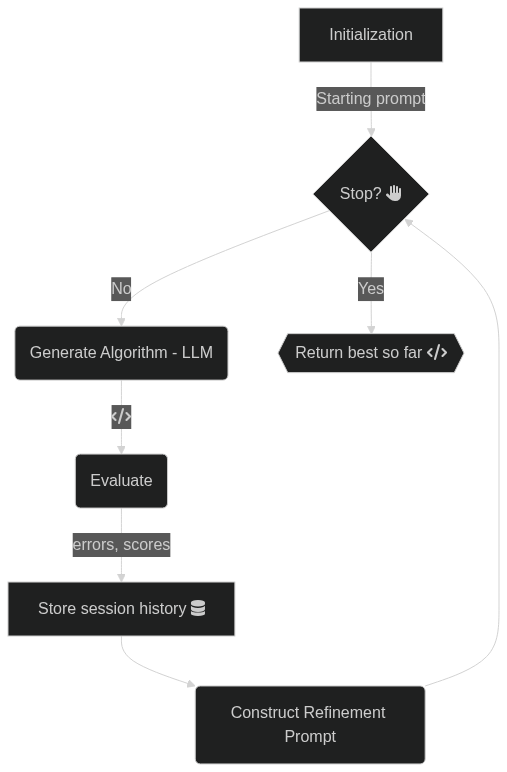

- Algorithm Generation: The LLM generates an initial metaheuristic algorithm based on a short problem description (the starting prompt).

- Evaluation: Each algorithm is tested against a wide set of benchmark optimization problems.

- Selection and Mutation: The best-performing (or last generated) algorithm is selected and mutated to create new variants.

- Iteration: This process repeats, continuously improving the algorithms through evolutionary principles.
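The four steps above can be sketched as a small loop. This is a toy illustration, not the LLaMEA code itself: the names `Candidate`, `mock_llm_generate`, `mock_llm_mutate`, `evaluate`, and `evolve` are all hypothetical, and the "LLM" is replaced by a stub that only perturbs a single step-size parameter of a simple hill climber. In the real framework the LLM would write and rewrite whole algorithms. The `elitist` flag models the choice between continuing from the best-performing algorithm and continuing from the last generated one.

```python
import math
import random
from dataclasses import dataclass
from typing import Callable, List, Tuple

Vector = List[float]


def sphere(x: Vector) -> float:
    return sum(v * v for v in x)


def rastrigin(x: Vector) -> float:
    return 10 * len(x) + sum(v * v - 10 * math.cos(2 * math.pi * v) for v in x)


# Assumption: two classic test functions stand in for the benchmark set.
BENCHMARKS: List[Callable[[Vector], float]] = [sphere, rastrigin]


@dataclass
class Candidate:
    """Toy stand-in for an LLM-written algorithm: a hill climber with one knob."""
    step_size: float

    def run(self, f: Callable[[Vector], float], dim: int, budget: int,
            rng: random.Random) -> float:
        x = [rng.uniform(-5, 5) for _ in range(dim)]
        best = f(x)
        for _ in range(budget - 1):
            # Gaussian step, clipped to the box [-5, 5].
            y = [min(5.0, max(-5.0, v + rng.gauss(0, self.step_size))) for v in x]
            fy = f(y)
            if fy <= best:
                x, best = y, fy
        return best


def mock_llm_generate() -> Candidate:
    # Hypothetical placeholder for prompting the LLM for an initial algorithm.
    return Candidate(step_size=1.0)


def mock_llm_mutate(parent: Candidate, rng: random.Random) -> Candidate:
    # Hypothetical placeholder for asking the LLM to refine the selected algorithm.
    return Candidate(step_size=parent.step_size * math.exp(rng.gauss(0, 0.5)))


def evaluate(c: Candidate, dim: int = 5, budget: int = 200) -> float:
    # Mean best objective value over the benchmarks (lower is better).
    # A fixed seed keeps comparisons between candidates repeatable.
    rng = random.Random(42)
    return sum(c.run(f, dim, budget, rng) for f in BENCHMARKS) / len(BENCHMARKS)


def evolve(iterations: int = 20, elitist: bool = True,
           seed: int = 0) -> Tuple[Candidate, List[float]]:
    rng = random.Random(seed)
    parent = mock_llm_generate()                  # Algorithm Generation
    best, best_score = parent, evaluate(parent)   # Evaluation
    history = [best_score]
    for _ in range(iterations):                   # Iteration
        child = mock_llm_mutate(parent, rng)      # Mutation
        child_score = evaluate(child)
        if child_score < best_score:
            best, best_score = child, child_score
        # Selection: continue from the best so far, or from the last generated.
        parent = best if elitist else child
        history.append(best_score)
    return best, history


if __name__ == "__main__":
    best, history = evolve()
    print(f"best step size: {best.step_size:.3f}, score: {history[-1]:.3f}")
```

Because the best score so far is tracked separately from the parent, the recorded history never gets worse, even when `elitist=False` lets the search wander away from the current best.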

Key Advantages

Automation of Expertise

Traditionally, developing effective metaheuristics requires extensive expertise and experimentation. LLaMEA reduces the reliance on human expertise by automating the creation and refinement process, making advanced optimization accessible to a broader audience.

Enhanced Performance

The iterative nature of LLaMEA lets each new algorithm build on feedback from earlier evaluations, and the resulting algorithms can be competitive with, and in some cases surpass, state-of-the-art optimization algorithms. This capability is particularly beneficial for industries dealing with complex optimization challenges, such as logistics, finance, artificial intelligence, and engineering.

Scalability

LLaMEA can be scaled to address a wide range of optimization problems, from small-scale industrial applications to large-scale global challenges. Its adaptability and robustness make it a versatile tool in the optimization toolkit.

Implications for the Future

The introduction of LLaMEA marks a milestone in the evolution of optimization algorithms. By combining the strengths of evolutionary algorithms and large language models, this framework opens new avenues for innovation and efficiency. As industries continue to face increasingly complex optimization problems, tools like LLaMEA will play a crucial role in driving technological advancements and operational efficiencies.

Conclusion

LLaMEA exemplifies the transformative potential of integrating advanced AI models with evolutionary principles. This innovative approach not only enhances the performance of optimization algorithms but also democratizes access to cutting-edge optimization techniques. While there is still a lot of work that can be done to extend the LLaMEA framework, such as supporting different population sizes, trying different selection mechanisms, and combining different LLMs, the groundwork has been done and is available for practitioners and researchers alike to explore and improve upon.

Explore the full details of this research in the original paper by Niki van Stein and Thomas Bäck here.

The code is available on GitHub.
